Published on 2021-04-22 22:00:00 | words: 11202

This post has a curious story.

It actually started long ago, when I was planning a follow-up to a couple of books that I published in the past: #BYOD, i.e. using your own devices also for business, and #SYNSPEC, i.e. how to attract, develop, and retain talent in our times of "liquid organizations".

Underlying all that, of course, is a society where we are gradually getting used to disclosing data that are absolutely irrelevant to the purpose for which they are requested.

Or, even worse, data that are relevant to the purpose, but only if used "illico et immediate", i.e. instantaneously and then forgotten, and that take on another meaning if stored, piled up over time, and connected with data about other people, organizations, and events to generate "profiles".

Yes, on that too I published a book (and a few articles), as my first business experience with data privacy dates back to the late 1980s, on a banking general ledger project: a main selection is on my GDPR page, but you can also search for "privacy" or "gdpr" within my website robertolofaro.com.

Now, I think that if you write about something, you should have some "depth", i.e. you should know more than what you write (directly through study and experience, or indirectly by extracting experience from others).

Anyway, today, 2021-04-22, I have two news items to share, from today's Italian newspapers:

1) the reported first step from Karlsruhe (the German Federal Constitutional Court) on the EU measures

2) the release of the new draft of the Italian PNRR.

As I wrote in previous articles, in Italy we still refer to the whole "bundle" under the overall name "recovery plan": #NextGenerationEU, the resilience measures also within the new EU budget, and the 2% for COVID19 health-related measures (from the ESM, called MES in Italian).

Obviously, the first includes both the "recovery plan" and the plans for restructuring and improving EU Member States' resilience and convergence (three balls in the air).

As for the latter... it is still a politically contentious element.

Anyway, in this article, focused on a theme that is often forgotten but unforgiving (fostering a corporate culture of continuous learning), I will comment on #NextGenerationEU and #PNRR only indirectly.

You can search this website for other articles specifically on both; moreover, in December 2020 I shared a book discussing a few cases of Italian bureaucracy as case studies, which also includes a chapter on both and can be read online.

I decided to split this article into a few parts:

- a shared introduction (the one you just read)

- two parallel personal and business sides

- a shared conclusion.

As represented in this "article roadmap":

This is the business side.

Sections in this article:

_adapting, adopting, evolving

_what is the title about

_nine islands of knowledge

Then, it would be time for a long personal digression on my own application of continuous learning.

But, on the business side, that would fit only within a book, not within a relatively "faster to read" article.

Therefore, if interested, you can read it here.

Otherwise, you can simply continue with the other sections on this "business side":

_corporate learning: swarms or lemmings?

_thinking outside the organizational boundaries

_it takes an ecosystem of unknowns and antennas

_using #NextGenerationEU to foster a new ecosystem

As I wrote above, the conclusions are shared:

_conclusions and thinking creatively about virtualization of personal work presence

Adapting, adopting, evolving

Today is Earth Day, so I added some more "trend listening" on both the IT and the business side, respectively on "going green" and "embedding ESGs".

It is one of the positive sides of this COVID19 era: in the past, I would have had to be in Frankfurt for the former, and would probably have been only listening in to the latter, from the EBRD.

The point is not to boast about what I am following; it is that, if you have some background and experience in some industries, even if you are not required to collect "learning points", it is now feasible to do something more than read a few pages here and there, maybe published years after the fact as a "case study".

My business background includes both IT and the business side (data, organization, controlling, change), and in the past keeping up to date implied both costs and a network where I was a cross-industry "antenna" for others, and had my own "antennas" in each sector.

The trouble was: I always received "pre-digested" information, i.e. "filtered" through the eyes, ears, and minds of those who shared it with me.

And the same, of course, applied to those asking me: mediated information could imply distortion and biases (on purpose, or just due to a kind of "indetermination principle of mediated information", where the observer distorts the reporting of the observation), as well as sheer unawareness of what could be of interest to the audience of your "storytelling" of what you heard, saw, and read (or of what that audience could be up to).

So, also in the past, as I had worked in multiple industries and across various business domains, the "schedule" of my attendance at seminars, workshops, and conferences would have looked like a jumble.

Until it was needed.

Still, it was critical to avoid adding further delay when sharing what you had already received late.

Anyway, my work since the late 1980s (as well as my prior political activities and my service in the Army) required cross-domain understanding: therefore, the key was translating from one lingo to another.

And being humble enough, whenever re-approaching a subject after some time, to accept that you had to go through the basics again.

Because, both in business and in IT, things do evolve: if you had asked for a "calculator" in the XIX century, they would probably have introduced you to a person who made computations by hand.

If you had asked for a computer programmer in the late 1970s, they would probably have introduced you to somebody working on COBOL, who knew just one language and one system, used monitors, and whose personal computing experience was using a pocket calculator to do what, in the XIX century, had been done by people (and more).

My smartphone right now is probably much more powerful than, and able to do the same things I was doing with, my 9 kg Toshiba 5100, costing around 5,000 EUR (nominal value; the current value would be much higher), that my company gave me in the late 1980s to work also outside the office on Decision Support System models and the associated documentation and presentations.

So, we now have resources, both for us personally and for our business activities, that should enable more flexibility and easier, unmediated access to expertise whenever needed.

What we still lack, in business, is often the organizational ability to do that in a productive way, i.e. adapting before adopting, and then also building "in house" the abilities to evolve, including through the famed "emergence".

This article has been sitting on my hard disk for so long (weeks) that...

...previous versions were lost in a crash.

In the end, a blessing in disguise, as an article evolved into the structure of a book.

It converged with material that I was preparing for the evolution of a previous book on human resources (specifically, experts) that I published a few years back, #Synspec.

So, I will use this as an excuse to share some ideas inspired by (or information derived from) further reports and webinars.

This article therefore will be both a "liaison" with previous material, and a "lead" toward future publications (articles and books).

Let's just say that continuous learning changed during the COVID19 lockdowns that started in March 2020 (at least in Italy).

And not just on the "qualitative" side that I hinted at above, but also on the quantitative side.

Many events that in the past required (paid or unpaid) registration and onsite presence became accessible with a mere free registration (or even just via YouTube broadcasting).

Over the last 12 months, I saw the gradual development of "formats": interestingly, many started in a "broadcasting" format and then evolved into something more interactive, even while using the same platform as others that stuck to the "conference format".

Therefore, attending online webinars and seminars, at least those that before were available exclusively in presence, was both a learning opportunity and an opportunity to analyze organizational culture.

Still ongoing.

As many are evolving.

We all saw the changes due to the unsynchronized lockdowns, disrupting supply chains (virtual and physical).

Anyway, many proposals to "adapt" to the new reality of less office work and more work from home often sounded a little too "Big Brother"-esque, as many organizations introduced technologies but did not redesign processes.

It was still common in the 1980s to have offices arranged so that the cadre or manager could run a "panopticon in sedicesimo" (a miniature panopticon), i.e. continuously watching over the staff from his desk (as it usually was a man).

In more recent times, that was replaced by a less obtrusive "walking through" (which actually revived some management approaches from decades before, aimed at "flattening" the organization).

Some recent proposals are really an implementation of Bentham's panopticon, with the observed knowing only that they can be continually monitored, but without knowing when or by whom, or sometimes even how.

Not really a motivational tool: better to improve processes and find other KPIs.

Hence, some companies, even some that are supposedly technological trend-setters, recently announced a return to the old in-presence model.

A pity, as this sends a mixed message; but, anyway, it is better than what could amount to continuous monitoring, as if Industry 4.0 sensors and digital twin approaches...

...were to be applied also to "human capital".

My hope is only that many companies will actually find the time and opportunity to study what happened since the early 2000s, as some have already done, to design a "new normal".

This could be the "blessing in disguise" of the current crisis: forcing companies to do what I had to learn to do whenever returning, years later, to a technology or industry. Humbly look at the basics, avoid too much "layering on the past", and find new ideas based upon current information, before seeing how to "transition" from what was old, true, and trusted to a new "configuration" that embeds something new, retains something old, and phases in something to replace what is to be phased out.

It is part of continuous organizational learning, but it should also motivate many companies to rethink their real organizational culture: if they recognize themselves in the temptations listed above, their real culture probably isn't as modern as they assumed.

This article, as I said above, is an outline of what will follow.

Therefore, I will focus on a main theme: how should corporate continuous learning evolve, considering the evolutions of business practices that were both part of digital transformation, and accelerated (and even innovated) during the COVID19 crisis?

Of course, besides ideas, I will also discuss some examples I heard of in business webinars, workshops, etc.: my old "knowledge transmission" role.

Incidentally, you can have a look at my CV page to see what my "biases" could be: I also added two links to the courses and certifications that I followed on the two main IT areas (SAP and related business processes and Industry 4.0, as well as data science), not to show off (it looks like a collection), but to show in which areas I actually tried to understand how things are implemented now.

I do not think that anybody following one or two of those courses to get certifications acquires any operational abilities: that is not their purpose, in my view.

But they are fine for my target roles since 2012 (when I re-registered in Italy): PMO, program management, and related solution-level roles.

Moreover, if you take courses (as I did) across a few years (the first was in late 2017, the latest is ongoing now) and across different business domains, you can also see the evolution of the architectural concepts, the integration of technologies and approaches, and the overall roadmap.

This is useful, considering that a company choosing an ERP, or staying on one, is in for the long run: each ERP carries an "organizational culture" impact, and a large company is not in the business of redesigning its culture just for a "technological upgrade" or "planned obsolescence". Once you decide to change, either on the same platform, by introducing new technologies, or by shifting to a different platform, you have to consider the phase-in and phase-out.

Many prefer to say "as is" and "to be", thinking in terms of architecture; I think in terms of the implementation of the architecture and its impacts on the viability of the underlying culture.

So, "layering" those training courses can deliver what I looked for: on the SAP side, an understanding of architecture and "embedded corporate culture" (as I presented ERP to some customers in the late 1990s); on the Kaggle/data science side, some of the basic building blocks.

Personally, on data science I spent time before, during, and after those courses, and on my own projects, as it is an extension of what I did with business data since the 1980s, trying to understand the potential without the mediation of "experts" who have a blatant conflict of interest (selling their own company's expertise, which in consulting too often implies having few experts and plenty of projects to "clone" solutions onto, to optimize revenue, instead of timing interventions and designing solutions tailored to the customer's needs).

On the SAP side... well, I do not have a SAP installation, and even if I had one, I do not have the capability to generate the volume of transactions needed for a meaningful "end-to-end" project.

So, I will stick to the "how current technologies are proposed by a leading supplier to their customers as embedded in its own solutions".

Which is, frankly, what I had learned in the mid-1980s while working for a software development arm of Andersen, in my first automotive and banking projects.

On the data side, look at the DataDemocracy and CitizenAudit sections, as that is where I plan to release the results of my own projects (based on open data), done in my spare time, hoping to share something that could be reused by others.

As usual, anything I post online can be reused for free: I add a CC-BY-SA 4.0 clause here and there, but I do not waste time on enforcement; it is more to protect other users from cases such as some I had in the 1990s, with "free riders" who even went as far as patenting business processes lifted from a collection of websites (mine, prconsulting.com, included).

As I used to joke in the 1990s, luckily Plato and Aristotle did not have a similar patent system, which shifts onto the challenger the cost of proving a patent invalid; otherwise, the whole of Western civilization would not have developed.

Before the real article, I will resume an old tradition: first, I will explain and expand the title.

Then, a few sections discussing a string of items.

The list of the latter is within the next section.

What is the title about

Let's start from the end.

I think that there is no need (at least while I am writing, in April 2021) to explain what COVID19 is.

But its presence within the title has a purpose: to disclose what I will not write about.

First, for the "technical" and "administrative" side of this issue, you can have a look at articles and materials that I share from verified sources, such as JAMA, on a weekly basis on Facebook and Linkedin.

Second, on the "political" side of the administrative response (i.e. current affairs), I sometimes share material on the above-mentioned social network profiles, and I have already written various articles.

Actually, on 2020-05-05 I shared a few points about what implementing distancing measures would mean for the typical (i.e. small) café or restaurant in Italy.

The title? "It is safe? Loooking forward post-COVID19 #Italy #automotive #banking #retail #urbanization #industrial #policy #COVID19".

As you can see, I covered the main industries that are within my CV: automotive, banking, retail- and added urbanization and industrial policy.

The key connecting element, in reality, is not COVID19, but... data.

Therefore, in this article, I think that I can safely skip talking about COVID19 impacts: there will be time in the future, and I have already shared plenty of material.

I will discuss it as an element within a larger picture.

As for NextGenerationEU, in December 2020 I shared both an outline of the plan from Brussels and the Italian proposal called PNRR (Piano Nazionale per la Ripresa e Resilienza, i.e. National Recovery and Resilience Plan): "BookBlog20201223 It takes a (global) village - introducing the CitizenAudit book series".

Then, a couple of months later, in February 2021, I shared an update on both, "Another step ahead/01 2021-02-19 #Italy #Government #COVID19 #NextGenerationEU", which included a preliminary analysis and a simple question: do we really need, in Italy, to aim at getting the whole 209bln EUR from NextGenerationEU (I stick to the original 209, so that it is easy to look for references; the real amount will be set in Brussels by consensus, not in Rome)?

Well, it seems that the recent excess demand for new debt issued by Italy, and statistics on the increase of cash left lingering on current accounts by citizens, show that there are other possibilities.

In July 2020 I shared a webapp on Legge 77/2020, a law on COVID19 expenditure using only national resources, focused on the timeline and "budgeting" of commitments.

That webapp also came with a dataset on my Kaggle profile.

In March 2021 I released a dataset containing a "catalogue" of the proposals for the Italian PNRR collected by Parliament until March 2021, which also included some documents from ministries.

The dataset is: PNRR NextGenerationEU in Italy - presentations / civil society presentations to the Parliament 2020-10-06 to 2021-03-18.

In this case too, I plan to release a webapp later, but focused on other issues, as the official PNRR will not be available until the end of April 2021.

As for continuous organizational learning, I started publishing online on it in 2003-2005 (an online e-zine, reprinted with updates in 2013 as a mini-book, BFM2013).

Anyway, the section Business: Rethinking on this website is on the same theme, but spread across dozens of articles and a dozen mini-books, as it is a "systemic" theme.

Therefore, this article will be really focused on this theme, to connect all the previous dots: COVID19, NextGenerationEU, and Continuous Organizational Learning.

Incidentally: I published the first book in the "connecting the dots" series (five volumes, so far) years before a book with the same title was published- the more, the merrier!

And all the books in this series can be read for free online, if you are unwilling to buy a copy, as my current purpose in publishing is mainly to share knowledge and ideas for wider dissemination.

As you can see, this section is actually a kind of "navigation map" across previously published material, to select a few "do you want to know more?" items.

Routinely, while living in Turin from late 2015 until early 2019, I met people who were just "testing the ground": too lazy to read what was already online.

Well, to them, I would suggest re-reading just this article: "Seizing the industrial policy opportunity: competence centers and recovery plan in Italy - thinking systemically".

Or also, why not "#2020: three #twisted #reasons for a #positive #outlook for #Italy and #Turin".

In Italy, it is a routine to be called "anti-italiano" if you look at reality and talk "shooting from the hip", i.e. say what you see and what you think.

Our traditional attitude? Not to displease anyone, just in case. Or even, trendier in recent decades, to sound assertive, decisive, and then... hedge.

We have even a cultural figure of speech: "cerchiobottismo" (look online, explaining it would take too long- it means just that).

Therefore, we end up in an endless list of meetings and, whenever a planning mistake (not a mere change in reality) is revealed, mainly due to an overly convoluted planning process that tried to "save face" for everyone...

...we end up in an even larger and more convoluted "face-saving" exercise, unless you can procure a scapegoat.

Then you wonder why it routinely happens that everybody has a clear understanding of what isn't working, but, when it comes to implementation, tribal territorial instincts lead us to do and say as little as possible that could affect tribal balances, hoping to achieve the main target: shifting the blame to another tribe.

Talking straight in Italy today will not buy any friends, but it is blatant that since early 2020 we did our worst in sorting out issues whenever a SNAFU arose (WWII jargon: "Situation Normal, All Fooled Up"; "fooled" is the official US Army cartoon title, while the real meaning was what made French Connection UK trendy for a while, decades ago).

Procrastination might be an art- but this is neither the time nor the place to procrastinate.

Hence, my "three (twisted) reasons for a positive outlook" shared in January 2020.

If anything, the COVID19 crisis and the political chaos that ensued and resulted in the new Draghi Government should act as a "twisted facilitator".

I could say: trust me. On a (luckily) smaller scale, I had plenty of cases where I was asked to act as a "twisted facilitator" to untangle a Gordian knot without cutting it.

Simply: replacing it while pretending to be looking at it, and then helping to cut it when it no longer had any structural value.

It is feasible- if you have a coalition of the willing (tongue-in-cheek joke from a docudrama from Morris on post-9/11).

To help both myself and the lazy, as well as those who simply focus on specific themes, in the past I added both "most read" and "search by tag cloud" features to this website, and will add more "semantic" search features at a later stage.

Actually, those search features originated from my own personal need to sometimes pick up an old article, and continue a concept within a new article.

Once in a while, I was asked what was more "trendy".

Yes, I did toy with Google Analytics and other tools in the past, but then decided to simply dump everything and, as my website is not a commercial website, to simply share with readers access to the same tools I used for myself: crude, but effective.

On the "trendy" side, for now (while waiting to release the "semantic" side), a few days ago I added the simplest choice, a "latest read" feature showing the "Zeitgeist", but avoided using the Z word, as it has been overused by conspiracy theorists...

What does it show? The most recently read 20 articles or pages (which is not a measure of frequency- it is a measure of time).
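The difference between a "most read" list (frequency) and a "latest read" list (time) can be sketched in a few lines of Python. This is a hypothetical in-memory illustration, not the implementation used on this website: the class name `ReadLog`, the default window of 20, and the sample article names are all assumptions.

```python
from __future__ import annotations

from collections import Counter, OrderedDict


class ReadLog:
    """Hypothetical read log contrasting recency ("latest read") with frequency ("most read")."""

    def __init__(self, window: int = 20):
        self.window = window
        self.recent: OrderedDict[str, None] = OrderedDict()  # ordered by last read time
        self.counts: Counter[str] = Counter()  # total reads per article

    def record_read(self, article: str) -> None:
        self.counts[article] += 1
        # Move the article to the end, so the last item is always the most recent read
        self.recent.pop(article, None)
        self.recent[article] = None
        # Keep only the latest `window` distinct articles
        while len(self.recent) > self.window:
            self.recent.popitem(last=False)

    def latest_read(self) -> list[str]:
        # Most recently read first: a measure of time
        return list(reversed(self.recent))

    def most_read(self, n: int = 20) -> list[str]:
        # Highest read count first: a measure of frequency
        return [article for article, _ in self.counts.most_common(n)]


log = ReadLog(window=3)
for article in ["gdpr", "pnrr", "gdpr", "pnrr", "gdpr", "synspec", "byod"]:
    log.record_read(article)

print(log.latest_read())  # ['byod', 'synspec', 'gdpr']
print(log.most_read(2))   # ['gdpr', 'pnrr']
```

In this toy run "pnrr" is the second most read item, yet it has already dropped out of the "latest read" window: the two lists answer different questions.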

Now, one element of the title, "nine islands", is still missing, albeit my Asian friends have probably guessed what I am referring to.

Of course: looking at it within the context of Continuous Organizational Learning.

Nine islands of knowledge

Well, a few days ago, as a hint, I relaunched an article that I posted in September 2014 as part of a culture & language learning journey.

Why now? Because, as part of a knowledge update started when the first COVID19 lockdown began in March 2020, I did what I had postponed for a few years (at least since December 2017, when I purchased a neural-network-on-USB from Intel called Movidius).

Specifically, along with updates on the business process side across the industries I worked in, and on some other IT-related elements, also on the "number crunching" side.

And adding data science and its trendy side, Machine Learning: because, despite its inherent limitations, I can think of a long list of activities, in my business number-crunching days as well as when collecting information to influence organizational (re)design, where these tools could have helped speed things up and find needles in haystacks that our own human biases filtered out, only for us to discover later how relevant they were as indicators of what was to come.

Anyway, it is interesting how many resources are now available for free: both software and, if you can post your data on a cloud platform, also hardware (I do not care whether it is marked "private" or "public": moving data outside is still moving data outside).

But even more interesting is how things evolved since the last time I spent time hands-on with AI, which started in the 1980s (before I started working) with PROLOG.

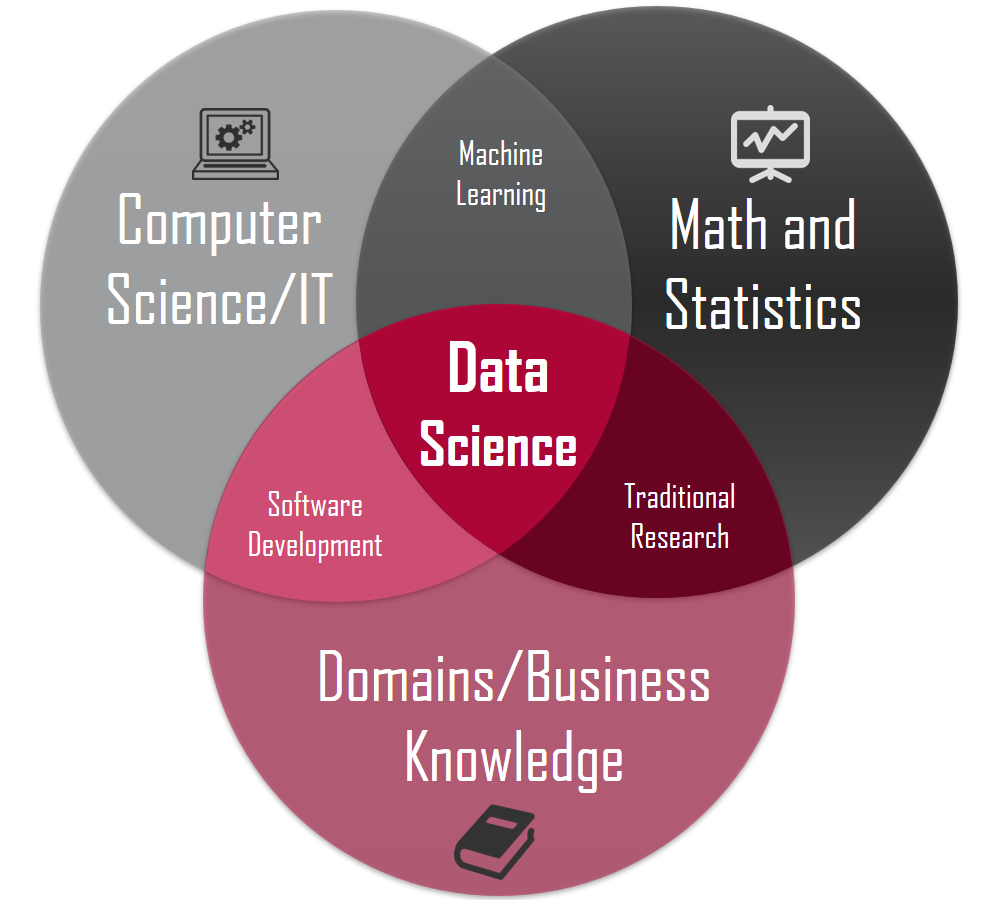

The most common element (with few variants) represented in all the books, courses, etc?

Something similar to this picture:

(source: "Why Data Science Succeeds or Fails")

Some descriptions went as far as dictating that a data scientist must excel in all the items that you see in this picture.

Personally, I only partially agree with the article, but I shared the picture because it shows that you need a team, not a person, even if recruiters, in their support to customers, are doing what they did with plenty of technologies and business activities in the past: asking for a "one-person band".

Even if you have a catalyst "bridging" all of those roles, (s)he needs a deeper understanding of at least one of the domains, and an understanding of at least the "forma mentis" (mindset) and knowledge boundaries of the others: neither a full-time specialist focused on just one element, nor somebody with only "surface-level" knowledge of all of them, can be a credible catalyst.

It is the difference, in my view, between a catalyst and a manipulator: the latter can motivate in fair weather, the former can keep motivating also on rainy days.

Actually, in most cases, considering that data initiatives often make sense only if they are timely, the critical element I observed (and I am talking about the "old" approaches, not Machine Learning etc.) is the usual one: those with business knowledge should have a more active role.

Now, if you count the areas within the picture, there are just seven- so, the "nine islands" does not refer to that.

It is a reference to a bit of the history of China: Yu the Great.

After WWII, until the late XX century, it was common to have some large businesses that "owned" all the processes end-to-end, e.g. an automotive company owning also the mines the raw materials came from, and the foundries turning them into what it needed.

Already when I officially started working, in the mid-1980s, companies were externalizing processes, to the point that e.g. in automotive, component suppliers became critical owners of both knowledge and processes.

And, in banking, derivatives were already showing a similar approach in the 1990s, even before e.g. the "too big to fail" crises (see e.g. "Economic impact of the G20's too-big-to-fail reforms" for links to what produced the need for these reforms).

Recently, I attended a webinar where companies described how COVID19 changed their perspective on risk.

As an example, they discovered that they did not know who the suppliers behind their "Tier-1" were, even though, as the various lockdowns scattered around the world showed, their business could be significantly impacted should something happen upstream.

Some companies, as I shared online few weeks ago, tried to integrate a more "systemic" approach, which implied a greater transparency across the whole supply chain.

Interestingly, even when we were apparently leaving the "first wave" and some of those companies assumed that they could go back to "traditional" approaches, they had decided to turn the new approach into the "new normal".

Hence, the "nine islands" approach.

Any initiative, including any data initiative that aims at sustainable (i.e. long-term) governance, needs to change.

Creating a "proprietary" ecosystem, an island that can happily survive whatever happens outside its walls, is not going to work.

Therefore, the point is to consider how many "islands" you will need to connect to, and to monitor their interaction with your organization.

As discussed in another section of this article, it is a matter of using a concept I discussed many years ago and shared in a few articles, i.e. acting by "circles of influence" and "relative mass".

To visualize, consider your organization as if it were a soccer ball on a rubber plane: the ball will distort the surface.

What you know you need to keep tabs on, i.e. other "soccer balls", will be, from your perspective, smaller or larger "satellites" (depending on their influence on your choices), even if their source organization is much smaller or much larger than your own.

This is a first circle.

Then, you will have other, more distant circles: sometimes with more influence than your own closer, smaller satellites, and sometimes, despite their size, with less influence due to their "distance".

In pre-COVID19 times, this more or less worked, as the contractual commitments of those in the "external circles" helped those closer to you, in turn, to make commitments that could be managed.
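For a supply chain, the distance dimension of these circles can be approximated as supplier tiers, the same "who is behind our Tier-1" question mentioned above. A breadth-first traversal of a "who supplies whom" map, starting from your own organization, finds the suppliers behind Tier-1 and assigns each one the nearest tier at which it appears. The following is a minimal Python sketch with invented company names (`OurCompany`, `SeatMaker`, etc.). It captures only "distance": the "relative mass" (influence) of each island would need separate weights, and, as noted above, a distant circle can still weigh more than a close one.

```python
from __future__ import annotations

from collections import deque


def supplier_tiers(suppliers_of: dict[str, list[str]], company: str) -> dict[str, int]:
    """Breadth-first search: return each upstream supplier with its nearest tier (1 = direct)."""
    tiers: dict[str, int] = {}
    seen = {company}
    queue = deque([(company, 0)])
    while queue:
        org, depth = queue.popleft()
        for supplier in suppliers_of.get(org, []):
            if supplier not in seen:  # first visit in BFS = shortest distance
                seen.add(supplier)
                tiers[supplier] = depth + 1
                queue.append((supplier, depth + 1))
    return tiers


def circles(tiers: dict[str, int]) -> dict[int, list[str]]:
    """Group suppliers by tier, i.e. by "circle" around the organization."""
    grouped: dict[int, list[str]] = {}
    for org, tier in tiers.items():
        grouped.setdefault(tier, []).append(org)
    return grouped


# Hypothetical map: each organization -> its direct suppliers
suppliers_of = {
    "OurCompany": ["SeatMaker", "ChipBroker"],
    "SeatMaker": ["FoamPlant"],
    "ChipBroker": ["WaferFab"],
    "WaferFab": ["RareGasCo"],
}
tiers = supplier_tiers(suppliers_of, "OurCompany")
print(circles(tiers))
# {1: ['SeatMaker', 'ChipBroker'], 2: ['FoamPlant', 'WaferFab'], 3: ['RareGasCo']}
```

The hard part in practice is not the traversal but obtaining the map, which requires the supply-chain transparency discussed above.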

In COVID19 times, you need a different approach, as changes can be more frequent and unpredictable.

Data alone do not solve any issue: you need a rationale for their use.

Again, in the past, this rationale could be almost self-referential: you defined what you needed, and therefore defined what you collected, exchanged, and then embedded in your decisions.

Personally, I think that the shift from business intelligence to business analytics, and from mainly internally generated data (as output of processes) to data generated both internally and by your own products and services interacting in the market, was not solved by big data, data lakes, and the like.

Again, without a rationale, some data lakes are probably data swamps.

And developing a rationale on planned interactions is feasible, but if your products and services are "embedded" in a world where anything and anybody generates, collects, or processes data (potentially also your own, and potentially influencing the behavior of your own "smart" products), using the old "centrally defined" rationale could still be feasible, but at a cost.

You will lose opportunities, and, as anybody generating data eventually has a way to "broadcast" those data (directly or via what some called "jail breaking"), you could actually be the one making investments generating benefits for other organizations.

Nine or nine hundred "knowledge islands" will anyway have to build bridges.

And building bridges, in a mutating environment, will require having a constant re-assessment of your real boundaries, real "influencers", and real "influences" (that you generate).

All this requires one element: continuous learning.

This is the right time for a long personal digression on my own application of continuous learning.

But, on the business side, that would fit only within a book, not within a relatively "faster to read" article.

Therefore, if interested, you can read it here: "Personal continuous learning in liquid times".

Or, instead, you can continue with a section about two concepts within this context (corporate continuous learning), swarms and lemmings, that I will discuss again, looking at the (near) future, within the conclusions.

Corporate learning: swarms or lemmings?

The "lemmings" I am referring to are not the real ones, but those from cartoons, that all blindly go in the same direction- over the cliff.

In my first job as a full-time employee, in 1986, my company was one of the software development arms of the consulting arm of Arthur Andersen, the audit partnership that was dissolved after Enron.

My colleagues from the main company had to study a certain number of units for each stage in their career.

I do not know if it was true or not, but lacking the prescribed number of "training points" was said to be considered an obstacle to promotion.

Still, I remember seeing a chart showing the "learning progression", i.e. how many units per hierarchical level.

Well, some team leads were creative, allocating course preparation time on projects, and "helping" team members to pass the entrance tests.

Then, some adjustments were made to avoid misallocation of time, as the concept was, of course, that by studying each "green unit" you acquired the knowledge and forma mentis required for your level. Therefore, you would have a "common lingo and understanding" to share with your colleagues potentially worldwide, should you be called to work on joint projects, converting a local or national pool of talent into a 70,000-strong community.

Thereafter, training gradually became Computer Based Training and, in some cases, travel to attend training (not for my unit, but for the consulting arm of Arthur Andersen) was no longer toward Chicago, but toward a couple of locations in Europe.

I was lucky: for my activities on Decision Support Systems in 1988-1990, I had access to the very reason why I had taken that job instead of one at a software house that had offered to hire me a few levels up and with a significantly higher salary (actually, higher than the one I had at my company by 1990, despite a few raises with an "outstanding" performance rating- but I never regretted the choice).

Reason? Before my first job interview in 1986, I caught a glimpse of the huge library, as the door was open, and that made my choice- little did I know that, at my pay grade, I would have no right to access the library whenever I wanted!

After a couple of years and a couple of critical projects (first in automotive, then in banking) with a quickly expanding set of tasks and responsibilities, I accepted to be moved to a new business unit focused on selling software packages and related services (consulting, training, auditing use by other Andersen units or customers, etc.), including Comshare's Decision Support Systems: it was an opportunity.

No, I was not promoted- but my job was.

I had to work across multiple industries, sometimes switching industry each day, and, to that end, I had full access to the library (and, I discovered later, some other benefits).

I also attended a few formal courses, but held outside the company.

Across the decades, I designed and delivered courses and training curricula- both technical and non-technical.

Gamification entered training long before social networks- and I am not referring just to the "training points" I was told about at Andersen in the 1980s: with the advent of Computer Based Training, costs were often reduced by converting training sessions into... sitting-30-minutes-in-front-of-a-screen.

Which is not a bad idea per se if, e.g., you have to redo a task once a year and, right before doing it, need to confirm that your skills are aligned with the current status of a process.

But sometimes "training" becomes a synonym of "scoring points", and the learning cycle becomes learn-dosomethingelse-learn-dosomethingelse etc.

Which is fine if you want to "inform".

But even if you were to fix your corporate continuous learning approach to have both "pull" (requests from employees) and "push" (selected by the company), that would not be enough right now.

It would have been enough in, say, the 1990s- but not now.

It is a matter of swarms vs. lemmings.

Even if you purchase training curricula from a catalogue, procurement and delivery take time- and maybe you are buying what would have been relevant yesterday.

If you have to create training from scratch, it can take months before you deliver something, and possibly further months before you deploy it at scale.

In all cases, you are "pushing" what you already assumed to be needed.

Acting as a swarm instead implies that learning opportunities can also develop from the bottom, e.g. proposals to share knowledge after a new system is introduced in part of the organization, knowledge that could be useful in other parts.

I followed similar initiatives first in the 1980s on Decision Support Systems, then also on business processes, also across my network of contacts, i.e. across organizations that had no other connection than myself and a potential shared interest (from co-opetition, if in the same industry, to cross-disciplinary organizational learning, when something can be adapted in another industry).

The issue when moving beyond the corporate boundaries, both in co-opetition and co-marketing or shared use? Ownership not just of the Intellectual Property, but also of the evolution process.

Now, as discussed above, COVID19 is something more than a simple crisis with ripple effects downstream, as was, e.g., the impact of the tsunami years ago on the electronics industry.

In that case, the impacts were localized and "predictable" after the initial impact.

In this case, as shown e.g. by the continuous increase/decrease in the strength and number of lockdowns and disruptions, companies that managed to actually access data across the whole supply chain, instead of relying just on contractual obligations with their Tier-1 partners, introduced a different, data-driven approach.

The key issue, in this case, is data not just about operations, but also about risks and impacts- and seen across time, not as a static check, but also as a trend.
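To make the "trend, not static check" point concrete, here is a minimal Python sketch. It assumes a hypothetical weekly risk indicator per supplier on a 0-1 scale and hypothetical alert thresholds; it compares a static check on the latest value with a least-squares slope over the whole history.

```python
from statistics import mean


def trend_slope(values: list[float]) -> float:
    """Least-squares slope of `values` against their position (week 0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    x_bar = (n - 1) / 2
    y_bar = mean(values)
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(values))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


def assess(history: dict[str, list[float]],
           static_limit: float = 0.5,   # hypothetical alert level for the latest value
           slope_limit: float = 0.02    # hypothetical alert level for the weekly trend
           ) -> dict[str, tuple[bool, str]]:
    """For each supplier return (static alert on latest value, trend label)."""
    result = {}
    for supplier, values in history.items():
        static_alert = values[-1] > static_limit
        slope = trend_slope(values)
        if slope > slope_limit:
            label = "rising"
        elif slope < -slope_limit:
            label = "falling"
        else:
            label = "stable"
        result[supplier] = (static_alert, label)
    return result


# Hypothetical weekly risk indicators (0 = no risk, 1 = disruption).
weekly_risk = {
    "supplier_a": [0.30, 0.30, 0.31, 0.30],
    "supplier_b": [0.10, 0.15, 0.22, 0.30],
}
print(assess(weekly_risk))
```

Both suppliers end the period at the same level and pass the static check, but only the trend shows that supplier_b is deteriorating.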

It is information that, in the end, would turn suppliers in many industries into "cost-plus" suppliers, akin to the model used in military procurement of new weapons systems, and in other similar activities.

This added transparency could create some issues- but I will discuss them in a later section.

Instead, focusing on corporate continuous learning, this additional transparency opens up a completely different scenario.

A "swarm" learning approach covering a supply chain has another side-effect: some approaches could end up becoming industry standards (to avoid feeding just your competitors), unless your supply chain still contains exclusive suppliers.

The latter is something that in some jurisdictions is not advisable: de facto, if you want to switch suppliers, you first have to make them no longer dependent on you for their turnover, or risk having to cover for their own management mistakes.

I routinely quote the case of an Italian company that was purchased by a major German customer to solve a similar issue, after the supplier generated negative impacts on a contract that the customer had with a car maker in Germany.

Consider just your Key Performance Indicators (KPIs henceforth): since the 1980s, I have seen almost all KPIs focused on "what happens within our organizational boundaries".

In the 1990s, as part of a negotiation on a risk reporting platform, considering the data available within the Italian "Centrale dei Rischi", I actually proposed and discussed adding, for business banking customers (in Italy, most companies are small), also external data about industry etc., to "cluster" them.

The concept was to see not just the risk level, but also the risk level by industry, location, and some other organizational information that was already available in compulsory databases (Chamber of Commerce, etc).
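A minimal Python sketch of that clustering idea, assuming hypothetical field names and a simple "at risk" flag per exposure (not the actual Centrale dei Rischi data model): exposures are grouped by industry and location, and each cluster gets the share of its exposure flagged as at risk, so that a single customer can be compared against its peers.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    customer_id: str
    industry: str   # assumed: sector code, e.g. from the Chamber of Commerce register
    province: str   # assumed: location of the registered office
    amount: float   # credit exposure
    at_risk: bool   # assumed internal flag, e.g. overdue payments


def risk_by_cluster(rows: list[Exposure]) -> dict[tuple[str, str], float]:
    """Share of exposure flagged as at risk, per (industry, province) cluster."""
    totals: dict[tuple[str, str], float] = defaultdict(float)
    risky: dict[tuple[str, str], float] = defaultdict(float)
    for row in rows:
        key = (row.industry, row.province)
        totals[key] += row.amount
        if row.at_risk:
            risky[key] += row.amount
    return {key: risky[key] / total for key, total in totals.items() if total > 0}


# Hypothetical business banking customers.
rows = [
    Exposure("C1", "textiles", "TO", 100.0, True),
    Exposure("C2", "textiles", "TO", 300.0, False),
    Exposure("C3", "mechanics", "MI", 200.0, False),
]
print(risk_by_cluster(rows))
```

The grouping key is the design choice: adding further attributes available in compulsory databases (company size, legal form) would only mean extending the tuple.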

I was just using my cross-industry experience, and extending a simpler concept that I had seen a decade earlier (connecting "ticker tape" information from Dow Jones to Executive Information Systems dashboards) to the data sources that I had observed in various industries.

So, at the time it seemed creative or even esoteric, but I considered it just a natural evolution.

It was the late 1990s, and therefore there were a few regulatory and technological issues that made it too expensive (no Internet, and interoperability issues), so I do not know if it was ever used.

When you have to enforce transparency to avoid a "butterfly effect", i.e. a disproportionate secondary impact of a minimal event far away along your supply chain, it also makes sense, once the data flows are in place, to see whether what generates those data could be jointly improved, e.g. by altering processes or by providing additional technology upstream, so that all the companies downstream can reduce their own "control" costs (or the time lag embedded in their procurement to cope with unknowns).
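As a minimal sketch of why Tier-1 visibility is not enough, assume the supply chain is known as a hypothetical "who supplies whom" map: a breadth-first search then lists every company downstream of a disrupted plant, with its distance in tiers. Company names are invented for illustration.

```python
from collections import deque


def affected_downstream(supplies_to: dict[str, list[str]], disrupted: str) -> dict[str, int]:
    """Companies reachable downstream from `disrupted`, with their distance in tiers."""
    distance = {disrupted: 0}
    queue = deque([disrupted])
    while queue:
        company = queue.popleft()
        for customer in supplies_to.get(company, []):
            if customer not in distance:  # visit each company once, at its shortest distance
                distance[customer] = distance[company] + 1
                queue.append(customer)
    del distance[disrupted]
    return distance


# Hypothetical supply chain: each key supplies the companies in its list.
supplies_to = {
    "resin_plant": ["connector_maker"],
    "connector_maker": ["harness_maker"],
    "harness_maker": ["carmaker"],
    "seat_maker": ["carmaker"],
}
print(affected_downstream(supplies_to, "resin_plant"))
```

In this toy map, the carmaker has a contract only with its Tier-1 harness_maker, yet it sits three tiers downstream of the disrupted resin_plant: the kind of link that only data flows across the whole chain make visible.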

An example of unknowns in the COVID19 era: in the past, an unknown could have been "structural", i.e. in a way potentially "manageable" (say- a small landing area at your supplier for returned parts).

Currently, it could be a lottery- e.g. a temporary lockdown that, with a few days of warning, shuts down a plant elsewhere for two weeks.

Yes, adding data improves risk management opportunities, but increases complexity and cross-dependencies.

If you consider this approach, corporate organizational learning implies something else.

Thinking outside the organizational boundaries

In some industries, this was already common before COVID19 (and even before digital transformation, e.g. when the first elements of "digital twins" were introduced).

The point was, anyway, deciding to involve suppliers in product design.

Whenever a supplier is shared between multiple customers, especially if the supplier is larger than many of its customers, it was common, also in non-physical industries, to have a degree of "standardization", i.e. it is the supplier that, across customers, tries to optimize its own processes by having e.g. documentation that is structured the same way (or reuses some common elements) across all its customers.

If you introduce full transparency across the supply chain, eventually a degree of "standardization" will have to emerge.

The difference vs. the past? Customers will not see just the end product (the "standard from the supplier"), but also all that contributes to it- obviously, a slightly more complex case of data integration and harmonization.

An interesting showcase of a similar approach, way beyond the "digital twin" developed for and by a specific company, and closer to what I could describe potentially as a "marketplace for the interoperability of digital twins", is Nvidia Omniverse.

Of course, if you are not into manufacturing, it might seem irrelevant.

But think instead of what is required to have e.g. a digital twin of a fridge able to interact with the digital twin of your kitchen within the digital twin of your home.

Actually, it could solve an old joke: in the past, when buying a portable radio, you would get an electrical schematic.

Instead, when purchasing a new house, you had to use devices to map where the wiring was, as nobody delivered a schematic.

Now, imagine if your house were to be considered a "set", a "box" containing and interacting with multiple "digital twin components" (your home appliances).

If you want that to be scalable to multiple homes, and not just to a "showcase" such as the one I saw in The Netherlands years ago, then you need a set of rules covering various aspects.

Including who owns what, remembering that a new version of the software within your fridge, unless it keeps some consistency with the "signals" it shared before, might disrupt...

...the kitchen.
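One of those rules can be sketched in a few lines of Python: before a new software version is deployed, check that every "signal" the appliance published before is still there with the same type, while new signals are allowed. The schema representation (signal name mapped to a type name) and the signal names are hypothetical assumptions for illustration, not any vendor's format.

```python
def breaking_changes(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """List changes that could break consumers of the old signals (e.g. the kitchen's twin)."""
    problems = []
    for name, old_type in old.items():
        if name not in new:
            problems.append(f"removed: {name}")
        elif new[name] != old_type:
            problems.append(f"type changed: {name} {old_type} -> {new[name]}")
    # Signals present only in `new` are additions, which old consumers can ignore.
    return problems


# Hypothetical signal schemas of two fridge software versions.
fridge_v1 = {"door_open": "bool", "temp_c": "float"}
fridge_v2 = {"door_open": "bool", "temp_c": "int", "energy_kwh": "float"}
print(breaking_changes(fridge_v1, fridge_v2))
```

Here the added energy_kwh signal is harmless, while the change of temp_c from float to int is reported as a potential disruption for whatever consumed it.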

Back to our current reality and the case discussed above: the Omniverse concept could be worth experimenting with, together with somebody from the business side (e.g. supply chain), to observe the evolution of some elements (e.g. the basic services nucleus, the open portal connectors) that could actually be useful as a conceptual "shared resource".

The reason? As long as you deal with formal supply chains, i.e. suppliers with contracts and terms & conditions, you have pre-defined mutual constraints anyway.

Even without digging into the "blockchain" debate (basically, connecting elements in a "chain of trust"), or its "smart contracts" side, if every device will have capabilities to generate/broadcast and receive/process data, again, we will need something more flexible than our current approach to contracts.

It takes an ecosystem of unknowns and antennas

In this long closing section, I would like to discuss two consequences, one at the cultural/organizational level, and one at the data/technical level.

the organizational culture impacts

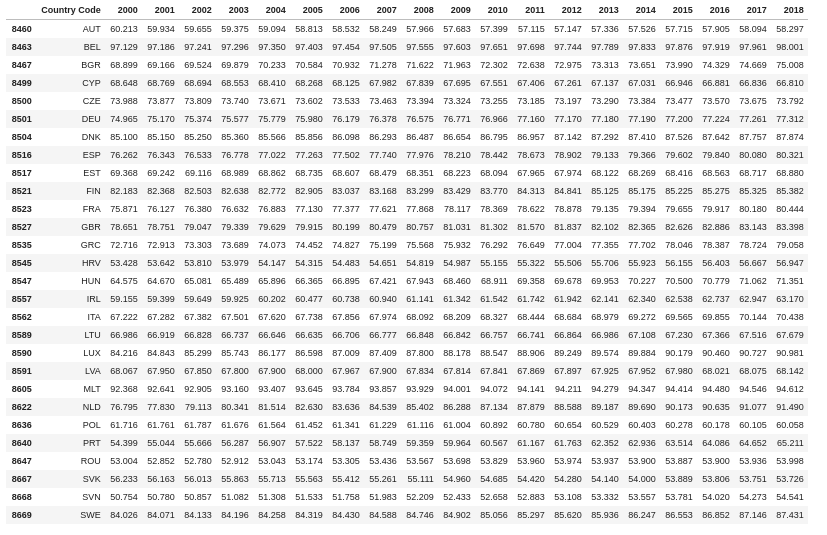

For all the discussion about COVID19 killing urbanization, this is the recent status within the EU:

(source: notebook on Kaggle)

Do you really think that all that can be undone in just one year?

Your products and services will have to consider the urban environment and its forthcoming evolutions, such as the presence of "Edge" computing facilities, i.e. bringing computing resources closer to where data are generated and consumed.

So continuous organizational learning will not be limited to corporate continuous learning and its associated web of formal agreements, even if extended to both suppliers and customers (and, of course, internal resources).

Once you introduce further flexibility through transparency, and extend that across the whole product and service design, development, delivery chain, probably you will realize that interactions within the market, suppliers as well as customers and, why not, even competitors, all will have at least an element of that "generate/broadcast and receive/process".

Some companies might be tempted to simply ignore this evolution, and follow the old approach.

The risk is that this new set of capabilities, and potentially more flexible and continuous re-arrangements of knowledge, will open a further round of disintermediation, similar to that introduced by Google, Amazon, Facebook, and Apple with their online and mobile services.

Since 2012, I have attended in Italy various workshops, seminars, and conferences about the future of business, the integration of industry 4.0 elements (e.g. IoT, digital twin), and fintech.

Of course, until the end of 2019 they were in person.

The interesting part in those in-person events was not just what was presented, but also how- the patterns of presentation, structuring of the narrative, etc- and the ensuing feed-back and reactions.

One element struck me back then: how many companies gradually evolved from industry-centric proprietary ecosystems, to cross-industry ecosystems- but still proprietary.

With the new data-based transparency across supply chains that some larger companies have tested since the beginning of the COVID19 crisis, I instead heard of more and more ecosystems that started within an industry and extended to other industries not through exchanges or connections, but through the expansion of the products and services offered by the company that initiated that specific proprietary ecosystem.

As an example, earlier this week a Board Member of Audi in a webinar at the Nvidia GTC21 (a series of conferences presenting both what's new about Nvidia products and customer cases or presentation of potential future cases) quipped that if Google and Apple can enter the carmaking industry, then Audi can enter their market.

And others, in a different presentation (to discuss a report on the future of automotive mobility), talked of cars as if they were smartphones, and the key difference between the speeches of the different carmakers presenting was...

...the share of services that they see as part of their future (for some, present) source of revenue- but, generally, across this and other webinars on the industry, estimates converged at 20-50% or more.

And they were not referring to the usual aftersales services (spare parts, etc.), but to data-oriented services, e.g. converting a moving autonomous driving vehicle into an entertainment platform-on-wheels, interacting with the environment it goes through.

So, imagine automaker X competing with Google on advertisement on vehicles through the on-board entertainment system.

Long ago, while I was in London, I remember supporting a colleague on a case study about a company that wanted to install computers on board London taxis, to be used by customers.

Too early for the times.

As I quipped last week during a job interview, eventually cars too could become virtual bank branches.

And, why not, if, as I wrote long ago, 3D printers were to be distributed e.g. in urban centers, even logistics could change, at least for "standardized" and consumer products.

If you have just an ecosystem (your own) to monitor, you can probably rely on your own employees looking for signals of potential evolutions, new products, services, etc.

Instead, if you consider that your own products (not just cars) and customers, through interactions and exchanges of data, might continuously do for physical "smart" products what each user of smartphones or major social platforms does every day (help to identify potential new products or services), being there first could mean having a competitive advantage.

In this case, probably your own product delivered to a customer might be your own "antenna" on potentially useful interactions with other ecosystems.

In 2018, I shared an article on one issue with my birthplace: "retaining structural ability to cope with #complexity in #Turin".

When you have a complex system and streamline its complexity by scaling down, even if you try to retain the "knowledge supply chain" (R&D, universities, labs, etc.) that supported the previous level of complexity, it gradually decays: knowledge does not exist in a vacuum and, if you do not use it, at best you can limit its decay, but it turns anyway into something lacking "deployability".

If you then suddenly have to regain the ability to cope with the old, higher level of complexity, unfortunately the risk is that, for a while, a kind of "cognitive dissonance" settles in.

In Italy, I observed it routinely- and in the 1990s, foreign colleagues and contacts (from the USA and other countries) who had worked in Italy even before I started working in 1986...

...all eventually recalled moments when somebody said "we used to have the Roman Empire", or something more recent but equally out of touch (e.g. the role of Italian towns in the Renaissance).

Now, if that "cognitive dissonance" applied to something of which those talking had no real experience, you can imagine what happened when, organizationally, they did have experience.

A typical example? Decades ago, while working in banking outsourcing, some bank organization managers told me that, a few years after moving to "full outsourcing" (the whole system outsourced), they realized they had lost the business knowledge needed to evolve their own system requirements.

The reason, that I saw also in other industries across the years?

When you outsource, or even just stop using some knowledge, in most cases the most experienced (and often most expensive) people who worked on what has been outsourced are either fired, shuffled elsewhere, or simply not replaced when they retire.

So, the company loses a "business domain antenna" that knew how to blend domain expertise with the specific organizational culture, and assess the impact of any change (or, also, of any decay).

Therefore, considering the new, forthcoming "open ecosystem" model, it might be tempting to cut down your costs- but besides the formal knowledge, you will also lose all the informal knowledge that could be your own competitive advantage for future evolutions.

Obviously, this is different for a new organization.

Unfortunately, many of the companies "driving" the technological evolution have no experience of long-term knowledge continuity, and limited or no understanding of what this entails.

Look e.g. at how many products and services have been abandoned with short notice, after enticing even major corporate customers to invest in those same technologies.

Now, the "data" side presents a different set of issues, apparently technical, but, frankly, easier to manage if you are used to a "marketplace" mindset.

the data and technical impacts

As often in my articles, "technical" means something more than just "related to technology".

When I write "technical", I mean- related to a specific domain or expertise, generally one that requires a long knowledge transfer and "savoir-faire" development, not the usual half-a-day or one-week-and-you-are-certified approach.

During the Nvidia GTC21, and not just for the Omniverse, a few speakers repeated an issue: in many cases, it would make sense to aggregate data from multiple parties to generate more meaningful analyses, e.g. to remove bias in data (such as overrepresentation of a segment of the population).

The key constraint is that, if data have value, how do you share data to enable better analytics for everybody, but without disclosing data?

The concept is not trust in the content, but trust in readers and in the "channels" conveying the information from producers to readers.

As one of the panelists discussing the issue was from Italy, I asked via LinkedIn whether they had considered using the Centrale dei Rischi (originally the central database on banking risk in Italy) as a model, as the same approach had gradually been extended from banks to other financial entities.

In my past consulting life, in the late 1990s, for the activity involving a UK-based company (the one I referred to previously in this article), we offered a solution on risk reporting and monitoring at the branch level.

During a negotiation with an Italian banking outsourcing company, I had to dig deeper into the Centrale dei Rischi functional specifications to be able to both deliver an English translation and map out the differences vs. the UK approach and its constraints, and discuss eventually, if the project were to be approved, the "convergence roadmap".

Now, the issue was similar to the one that I heard over the last few days: having competing parties contributing data to a shared datasource while avoiding that your competitors see your data.

The concept was solved as follows (to make it simpler, I will refer only to banks and streamline the concept and flow):

1. each bank contributes, with an agreed format and frequency, data about its own customers, each referenced by a unique customer identifier

2. the database can deliver the overall level of commitment for a customer, across all the banks reporting to the database

3. each bank can see this aggregate, but only its own details

4. to avoid "free riders", querying about somebody who isn't yet a customer implies paying a fee.

There are further details (e.g. each bank might have multiple systems internally, but anyway, to send data and ask for information, each customer will have a unique identifier used in exchanges with the database).
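The four steps above can be sketched in Python as a toy in-memory registry. Everything here is a simplifying assumption for illustration, not the actual Centrale dei Rischi interface: one exposure amount per customer per bank, a hypothetical flat fee, and "isn't yet a customer" approximated as "the querying bank has not reported this customer". The class and method names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class QueryResult:
    total_exposure: float  # aggregate across all reporting banks
    own_exposure: float    # only the querying bank's own detail
    fee_charged: float


class SharedRiskRegistry:
    """Hypothetical trusted central database; banks never see each other's details."""

    def __init__(self, prospect_fee: float = 10.0):  # hypothetical fee
        # exposures[customer_id][bank] = amount reported in the latest cycle
        self._exposures: dict[str, dict[str, float]] = defaultdict(dict)
        self._fees: dict[str, float] = defaultdict(float)
        self._prospect_fee = prospect_fee

    def contribute(self, bank: str, customer_id: str, amount: float) -> None:
        """Step 1: each bank reports its own customers, keyed by a unique identifier."""
        self._exposures[customer_id][bank] = amount

    def query(self, bank: str, customer_id: str) -> QueryResult:
        by_bank = self._exposures.get(customer_id, {})
        total = sum(by_bank.values(), 0.0)          # Step 2: aggregate across banks
        is_own_customer = bank in by_bank
        fee = 0.0 if is_own_customer else self._prospect_fee  # Step 4: no free riders
        self._fees[bank] += fee
        # Step 3: other banks' individual amounts are never returned.
        return QueryResult(total, by_bank.get(bank, 0.0), fee)

    def fees_due(self, bank: str) -> float:
        return self._fees[bank]


registry = SharedRiskRegistry()
registry.contribute("bank_a", "C001", 500.0)
registry.contribute("bank_b", "C001", 300.0)
print(registry.query("bank_a", "C001"))  # own customer: aggregate plus own detail, no fee
print(registry.query("bank_c", "C001"))  # prospect: aggregate only, fee charged
```

The segregation relies entirely on the registry being run by a party all contributors trust: the code enforces what is returned, not who can read the underlying store.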

I know- now many of my readers will start talking about blockchain etc.

But: this was long before, and blockchain has another purpose, while the key feature of this system was that the Bank of Italy was trusted by all the contributing banks.

So, it was based on trust, centralized, and without data dissemination.

Now, move that toward "proprietary ecosystems": the same approach could still work, if the "manager" of the ecosystem will be a trusted third party.

Of course, if your ecosystem to be used for benchmarking etc instead requires all your suppliers to provide internal detailed information that is business confidential, and then stored along with that of their competitors that are also your suppliers (no large organization should ever single-source something critical)...

...the issue could be that suppliers would not be willing to share that level of information with you, if then you use the information as a negotiating tool to maybe involve a third supplier.

Asking for data during a negotiation is fine, from those shortlisted (in the 1990s and early 2000s, I also designed Vendor Evaluation models in a few domains).

Asking suppliers to continuously provide operational details would require a third party that is really independent and can ensure data segregation- also from yourself, to avoid conflicts of interest.

In a webinar on supply chain, a while ago I heard from a company that adopted greater transparency across the whole supply chain and then developed KPIs to better assess risks, but the way it was described was as a "proprietary" ecosystem, with a strong motivation for suppliers to be on board.

I wrote at the end of the previous sub-section that the new data "open ecosystem", if you want to be able to provide the value added that multiple contributors can provide, would benefit from a "marketplace" mindset.

The concept is: if your interest is to build a marketplace that enables a higher level of analytics involving multiple sources, usually competing with each other, you have to focus on the value added you create for your "customers".

Meaning: you should resist the temptation that some marketplaces have, of having those managing them turn into "market makers".

Actually, in the late 1990s I said as much to one of the first online startups I supported (business and marketing planning): at least, build a "Chinese wall", i.e. structural separation.

If you are talking about a marketplace of, say, data collected across urban areas from vehicles from multiple suppliers, or from companies and people moving around, and then try to use that information to influence the market, unless you also start to produce data that replace those data (not feasible with the concept I am referring to, i.e. every agent as both consumer and producer of data and analytics), it is only natural that, in a data-centric society where many have access to capabilities to "understand" what is going on, you will simply lose the sources.

And, as happened to other "marketplaces", it is not just a matter of products or services exchanged outside the platform: excessive revenue generation through "squeezing" membership, or annoying advertisements and product placement, could also trigger a flight toward other marketplaces.

Using #NextGenerationEU to foster a new ecosystem

Yes, these are the real conclusions.

Online, even before releasing in December 2020 my book containing the chapter on #NextGenerationEU and the Italian PNRR, I advocated one simple approach to counterbalance the streamlining of bureaucracy in Italy.

More data transparency.

I know that some in business in Italy complain that there is already in some cases too much transparency- up to the point where you have to disclose information that potentially could be of use to competitors in future activities.

But, frankly, we are a country where organized crime has a double-digit share of GDP- and nobody really knows its impact on assets since, both during the 2008 crisis and since 2020, routine news of attempts to take over cash-based businesses has brought back old stories.

Of course, in a liquidity crisis (which, frankly, I saw already in Italy in part well before 2008), cash-rich organized crime aims to extend its access to assets that could both generate revenue, and be a useful conduit for money laundering.

A few days ago, it was announced that some minimal data will be published about infrastructure projects that will start soon- a good start, but still not enough to enable, across the country, the level of public scrutiny that allowed streamlining the rules applied while rebuilding the bridge in Genoa.

The "nine islands" concept could also be useful on these issues: local, regional, and national authorities in Italy have to work with a maze of rules and regulations, but in most cases they still release little "open data", and way too late to enable any further commercial use (or even to pre-empt organized crime infiltration at the first signs).

At least in the 20 Metropolitan Areas (Turin, Milan, Rome are three of them) that have internal staff and bureaucracy large enough to cope with this kind of project, it would be interesting to have pilot "data centric projects" to create "edge" computing resources and both collect and broadcast data, or to enable experimenting with the kind of multi-company analytics that some companies tried during the COVID19 crisis.

As an example, integrating sensors distributed also in businesses, and trying new proximity services.

I shared in the past other examples, but the concept is simple: it is not just a matter of technology, it is a matter of building organizational cultures.

If leading towns were also able to build and enable access to their own "data ecosystem" at the local level, they could test approaches that are relevant within the Italian legal context, and could then be reused by other smaller local authorities.

Incentive? An extension of other experiments that e.g. are already ongoing in mobility to increase attractiveness for foreign businesses.

The new version of the Italian PNRR ("Piano Nazionale di Ripresa e Resilienza", i.e. National Recovery and Resilience Plan) will gradually add more details.

Also, it could be interesting to already have in place a data collection system able to do benchmarking and analytics following the guidelines described above, again to enable (this time, at the national level) a data culture that could also benefit the State (and its citizens) indirectly, by enabling analyses from the private sector reusing public data on PNRR projects, and identifying potential new services or products.

Again: continuous organizational learning does not apply just to private companies, but also to local, regional, national authorities, and their interactions with companies and organized groups of citizens.

Conclusions and thinking creatively about virtualization of personal work presence

In reality...

...it is up to you to derive your own conclusion and (hopefully) action plan.

Mine? They will be in future articles and within a forthcoming book...

... even if the title of this section is obviously a clear indication of a subject worth further thinking about.
